Archive

VMware Shared Folders are Really Slow

I am currently waiting for some code to compile and found a bit of time to type up a quick blog.

As described in a previous post, I have set up my laptop to run Windows as a virtual machine guest with Mac OS X (Mountain Lion) as the host.

Today, I needed to compile some code and, thinking I was being clever, I put the code on the host OS (OS X) and shared the folder via VMware to the Windows guest OS.

Compiling from inside VMware

I ran my msbuild process from the shared folder, and it took FOREVER to compile. The obvious choice here is of course to blame virtualisation itself. After all, I only have 2 cores allocated to the virtual machine.

But not so fast! Our tuning knowledge comes in quite handy here. Have a look at the CPU pattern while I am compiling:

Just like with SQL Server, I start my tuning at a very high level (in this case, task manager) and dig in from there.

The first question we ask ourselves as tuners is: Does what I see make sense?

In this case, it obviously doesn’t. MSBUILD is set up to build in a highly parallelised way, so it should be using both my cores, and there is no obvious I/O bottleneck in the system. Having 50% of two cores busy (and with high kernel times) is the equivalent of one fully busy core, which looks a lot like a single threaded bottleneck to me. The build was taking over 15 minutes, which was much longer than expected.

Diagnosing the Problem – our friend Xperf

Normally, I use xperf to troubleshoot servers. But it sure comes in handy for misbehaving client machines too.

Task manager only shows that the time is spent in the process d.exe, which is part of the build process. Is the compiler bad or must we look elsewhere? It sure would be surprising if the compiler used all the kernel time, wouldn’t it?

Here is the quick and dirty CPU “zoom in” xperf command to get the details we need:

- xperf -on latency -stackwalk profile

- …wait a bit

- xperf -d <myfile>.etl

This captures a sample of the stack and CPU usage of each process and kernel module. From here, it is quite clear what is going on – let me walk you through the analysis.

First, open the trace with xperfview. I recommend staying with the Win7 version of xperfview, as the Win8 interface is… well… a Win8 interface.

Pick the CPU Sampling per CPU, right click and choose Summary Table:

From here, pick the columns: Module, CPU and % Weight which allows you to summarise by module. On my box, it looks like this:

Aha!… Most of the CPU burn goes in vmci.sys (just ignore intelppm.sys). This isn’t a part of Windows. It’s relatively easy to trace this file back to VMware.

So, who calls into this kernel module? Adding the stack column after the module, we can see that too:

Eureka: It is file system access that is causing the slowdown. See the call stack? Starts from GetFileAttributesW and ends up inside vmci.sys.

Fixing the problem

Now, before you go ahead and conclude that VMware adds a horrible overhead to I/O, let’s just try to move the source files into the guest OS itself. Recall that my machine is using VMware shared folders to access the source code. It might simply be the sharing framework that is acting strange…

The result of using the guest OS’s file system is staggering. Running the build process now looks like this at the CPU level:

And the total build time is down from over 15 minutes to less than 3 minutes.
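If you want to compare the two locations without running a full build, a rough sketch like the one below can help. It walks a folder and times one metadata lookup per file. Note the assumptions: `os.stat` exercises the same kind of per-file metadata request that showed up in the trace, but it is not necessarily the same Win32 call as GetFileAttributesW, and the function names here are made up for illustration.

```python
import os
import sys
import time


def time_metadata_lookups(root):
    """Walk `root`, stat every file, and return (file_count, elapsed_seconds)."""
    count = 0
    start = time.perf_counter()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            # Metadata only: no file contents are read here.
            os.stat(os.path.join(dirpath, name))
            count += 1
    return count, time.perf_counter() - start


def report(root):
    """Print how many metadata lookups per second `root` sustained."""
    count, elapsed = time_metadata_lookups(root)
    rate = count / elapsed if elapsed > 0 else float("inf")
    print(f"{root}: {count} files in {elapsed:.3f}s ({rate:,.0f} lookups/s)")


if __name__ == "__main__":
    # Pass the folders to compare on the command line, for example the
    # shared folder and a copy of the same tree on the guest's own disk.
    for folder in sys.argv[1:]:
        report(folder)
```

Run it once against the shared folder and once against the local copy. A large gap in lookups per second would point at the sharing layer rather than at the compiler.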

Thank you xperf…

At this point, we are reduced to guessing what is going on

One Million IOPS on a 2 socket server

Today, using Fusion ioMemory technology, I worked with our team of experts to hit 1M 4K random read IOPS on Windows. We did this on a 2-socket Sandy Bridge Server.

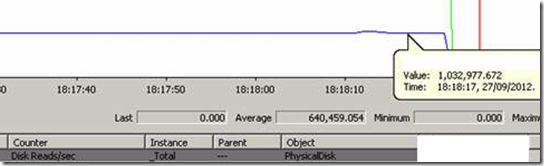

Below is the screenshot to prove it:

Think for a moment about what it will take to actually make use of all those IOPS. If this is the type of speed you have at your disposal, maybe it is time to rethink what is possible. Check out our SDK at http://developer.fusionio.com for the leading edge work Fusion-io is doing in this space.

As I am sure my regular readers can guess, I am loving my new job!

Joining FusionIo

I am very happy to announce that I have signed a contract with FusionIo and will be joining them as CTO of EMEA from 1st September 2012.

As many of you know, I have worked together with FusionIo on many occasions and really enjoyed the collaboration. I believe that their products hold the keys to a new era of computing and it is an honour to join their ranks. I will be looking forward to doing a lot of exciting research and customer implementations for them.

This brings me to the work I have been doing since I left Microsoft. Here is how it will transfer:

Consulting Contracts

I have contracts with some customers open. These are all due to terminate before 1st September and I will of course honour my agreements here. Unfortunately, my new job will not allow me to continue the collaboration with these customers on a consulting basis after this. The good news is that my courses will still be available and I will be able to share my knowledge through this channel.

Courses and Conferences

Contributing information to the community is one of my great passions in life. FusionIo has allowed me to continue to pursue this interest. My courses will still be available, although only for a very limited number of days every month, as the course time will be coming out of my vacation days (hint on how to get a discount). I expect demand to be high. There are already three tuning courses set up across Europe which will be held as planned, and a lot of people have made it clear they want more. I will soon announce the exact dates for the planned courses on this blog and let you know how to join the ones that are open to the public. The material is looking amazing and uses the new format that evolved at SQL BITS and drove the top scores there. I expect these will be my best presentations yet. I am also happy to announce that my data modelling course is well underway and will be available soon.

I will continue to submit abstracts for conferences and stay in close touch with the community, just like I have always done. And this brings me to:

Grade of the Steel

I am very excited that FusionIo has an interest in expanding the testing I have done with my Grade of the Steel Project. I will continue to run benchmarks on the latest and greatest storage and provide configuration and tuning guidance specific to non-volatile memory. It is too early to say exactly which format the publications will take, but I will keep you posted on this blog.

Reading Material: Abstractions, Virtualisation and Cloud

When speaking at conferences, I often get asked questions about virtualisation and how fast databases will run on it (and even if they are “supported” on virtualised systems). This is a complex question to answer, because it requires a very deep understanding of CPU caches, memory and I/O systems to fully describe the tradeoffs.

When Statistics are not Enough – Search Patterns

Co-author: Lasse Nedergaard

Yesterday, Lasse ran into an issue with a query pattern in the large database that he is responsible for. Based on our conversation, we wrote up this blog and created a repro.

The troublesome query we were debugging executed like this:

1. Find a list of key values to search for

2. Insert these keys in a temp table (let’s call this the SearchFor table)

3. Join the temp table to a large table (let’s call it BigTable) and retrieve the full row from the large table

Why not use a correlated sub query in step 2? In this case, the customer in question had multiple code paths (including one accepting XML queries) that all needed to pass thousands of keys to a final search procedure. They wanted a generic way to pass these key filters to the final access of BigTable.
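The three steps above can be sketched as follows. This is only an illustration of the pattern, not the customer's code: it uses SQLite through Python's `sqlite3` module as a stand-in for SQL Server, and the column names `k` and `payload` as well as the helper names are assumptions. Only the table names SearchFor and BigTable come from the description above.

```python
import sqlite3


def build_demo_db(n_rows=1000):
    """Create an in-memory database with a hypothetical BigTable(k, payload)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE BigTable (k INTEGER PRIMARY KEY, payload TEXT)")
    conn.executemany(
        "INSERT INTO BigTable (k, payload) VALUES (?, ?)",
        ((i, f"row-{i}") for i in range(n_rows)),
    )
    return conn


def fetch_rows_for_keys(conn, keys):
    """Steps 2 and 3: load the keys into a temp table, then join to BigTable."""
    cur = conn.cursor()
    # Step 2: every code path can fill the same temp table with its keys.
    cur.execute("CREATE TEMP TABLE IF NOT EXISTS SearchFor (k INTEGER PRIMARY KEY)")
    cur.execute("DELETE FROM SearchFor")
    cur.executemany(
        "INSERT OR IGNORE INTO SearchFor (k) VALUES (?)",
        ((k,) for k in keys),
    )
    # Step 3: one generic join retrieves the full rows.
    cur.execute(
        "SELECT b.k, b.payload "
        "FROM BigTable AS b "
        "JOIN SearchFor AS s ON s.k = b.k "
        "ORDER BY b.k"
    )
    return cur.fetchall()


if __name__ == "__main__":
    conn = build_demo_db()
    # Step 1 is represented by a hard-coded list of keys.
    print(fetch_rows_for_keys(conn, [3, 7, 42]))
```

The point of the design is that the final search procedure only needs to know about SearchFor, no matter which code path produced the keys.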

Boosting INSERT Speed by Generating Scalable Keys

Throughout history, similar ideas tend to surface at about the same time. Last week, at SQLBits 9, I did some “on stage” tuning of the Paul Randal INSERT challenge.

It turns out that at almost the same time, a lab run was being done that demonstrated, on a real world workload, a technique similar to the one I ended up using. You can find it at this excellent blog: Rick’s SQL Server Blog.

Now, to remind you of the Paul Randal challenge: it consists of doing as many INSERT statements as possible into a table of this format (the test does 160M inserts in total):

CREATE TABLE MyBigTable (

c1 UNIQUEIDENTIFIER ROWGUIDCOL DEFAULT NEWID ()

,c2 DATETIME DEFAULT GETDATE ()

,c3 CHAR (111) DEFAULT 'a'

,c4 INT DEFAULT 1

,c5 INT DEFAULT 2

,c6 BIGINT DEFAULT 42);

Last week, I was able to achieve 750K rows/sec (runtime: 213 seconds) on a SuperMicro, AMD 48 Core machine with 4 Fusion-io cards with this test fully tuned. I used 48 data files for best throughput, the subject of a future blog.
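As a quick sanity check of those numbers, the throughput follows directly from the total insert count and the runtime. The helper name below is made up for illustration.

```python
def rows_per_second(total_rows, runtime_seconds):
    """Average insert throughput over the whole run."""
    return total_rows / runtime_seconds


# 160M inserts in 213 seconds, as quoted above.
rate = rows_per_second(160_000_000, 213)
print(f"{rate:,.0f} rows/sec")  # prints 751,174 rows/sec
```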

IO Complexity of processing aggregation phase

In my last post I missed the IO complexities of the aggregation phase. My memory of external sorting has become a bit rusty and I once again needed to take Knuth down from my bookshelf.

First of all, if you have not yet tried running perfmon while processing, this would be a good time. It is worth noticing that the aggregation phase does not consume much IO. Even on a less than well tuned IO subsystem, the aggregation phase is typically CPU bound. For those of you only interested in optimizing the speed of the aggregation phase: take another look at your attribute relationships and properties (you can use some of my previous posts as inspiration).

From my mail exchange with Eric Jacobsen, I gather that the external sort used by Analysis Services is a variant of balanced merge. The basic idea of the algorithm is:

1. Read rows from input (in this case the nd rows from the read phase) until memory is full

2. Sort records in memory (for example using quicksort)

3. Write sorted records to a temp file on disk

4. Are there still rows left in the input? If so, go to step 1

5. Read all temp files and merge these to a new, sorted file

Steps 1-3 require nd reads and nd writes. Steps 4-5 require the same number of reads and writes. Furthermore, there is a small lg(q) CPU overhead for merging, with q being the number of temp files.
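To make the read and write counts concrete, here is a minimal sketch of the algorithm in Python. It is a generic textbook-style external merge sort, not the Analysis Services implementation: the function name, the one-integer-per-line file format and the `memory_rows` parameter are all assumptions chosen for illustration.

```python
import heapq
import os
import tempfile
from itertools import islice


def external_sort(rows, memory_rows, out_path):
    """Sort integers from `rows` into `out_path` using sorted temp files.

    At most `memory_rows` rows are held in memory while building each run.
    Returns (rows_read, rows_written, number_of_temp_files).
    """
    rows = iter(rows)
    reads = writes = 0
    run_paths = []

    # Steps 1-4: fill memory, sort, write a sorted temp file, repeat.
    while True:
        chunk = list(islice(rows, memory_rows))
        if not chunk:
            break
        reads += len(chunk)
        chunk.sort()
        fd, path = tempfile.mkstemp(suffix=".run")
        with os.fdopen(fd, "w") as run:
            run.writelines(f"{value}\n" for value in chunk)
        writes += len(chunk)
        run_paths.append(path)

    # Step 5: merge all temp files into one sorted output file.
    # heapq.merge keeps one row per temp file in a heap, which is where
    # the lg(q) cost per merged row comes from.
    files = [open(path) for path in run_paths]
    try:
        streams = [map(int, f) for f in files]
        with open(out_path, "w") as out:
            for value in heapq.merge(*streams):
                reads += 1   # each row is read back from a temp file
                writes += 1  # and written once to the final file
                out.write(f"{value}\n")
    finally:
        for f in files:
            f.close()
        for path in run_paths:
            os.remove(path)
    return reads, writes, len(run_paths)
```

For n input rows, the function reports 2n reads and 2n writes regardless of how many temp files were needed, since this sketch merges all temp files in a single pass. Only the number of temp files q changes with `memory_rows`.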

From the algorithm above we can also deduce that we can save a significant amount of IO if we have plenty of memory for the sort in step 2. Not surprising, but it is worth noticing that the record size in Analysis Services tends to be very big. 64-bit memory spaces clearly have a big advantage in the aggregation phase.

Summarizing, we have:

IOREAD(Aggregation phase) = O(2 nd)

IOWRITE(Aggregation phase) = O(2 nd)